🚀 @SBERLOGACOMPETE webinar on data science:

👨🔬 Anton Vakhrushev "SketchBoost: Fast Gradient Boosted Decision Tree for Multioutput Problems"

⌚️ Monday 11 December 19.00 (Moscow time)

Add to Google Calendar

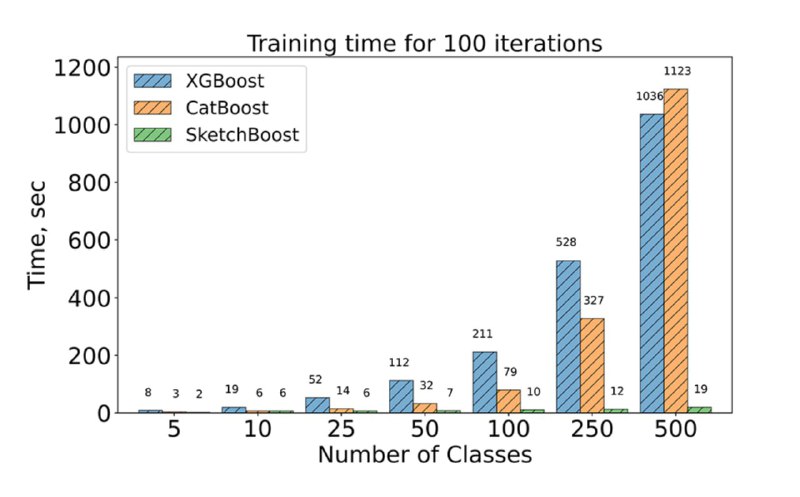

Gradient Boosted Decision Tree (GBDT) is a widely-used machine learning algorithm that has been shown to achieve state-of-the-art results on many standard data science problems. We are interested in its application to multioutput problems when the output is highly multidimensional. Although there are highly effective GBDT implementations, their scalability to such problems is still unsatisfactory. In this paper, we propose novel methods aiming to accelerate the training process of GBDT in the multioutput scenario. The idea behind these methods lies in the approximate computation of a scoring function used to find the best split of decision trees. These methods are implemented in SketchBoost, which itself is integrated into our easily customizable Python-based GPU implementation of GBDT called Py-Boost. Our numerical study demonstrates that SketchBoost speeds up the training process of GBDT by up to over 40 times while achieving comparable or even better performance.

It easy to install: pip install py-boost

It easy to use - see tutorial notebooks: Kaggle Open problems notebook, Tutorial_1_Basics, Tutorial_2_Advanced_multioutput, Tutorial_3_Custom_features

Github

Paper: Iosipoi, Leonid, and Anton Vakhrushev. "SketchBoost: Fast Gradient Boosted Decision Tree for Multioutput Problems." Advances in Neural Information Processing Systems 35 (2022): 25422-25435.

Gold medals on Kaggle: CAFA5 , Open problems - single cell perturbations 2023, Open problems 2022,

Lots of silver/bronze medals in recent Open problems 2023 were based on Pyboost.

Zoom link will be in @sberlogabig just before start. Video records: https://www.youtube.com/c/SciBerloga - subscribe !

📖 Presentation: https://t.me/sberlogacompete/10211, Poster: https://t.me/sberlogacompete/10215

📹 Video: https://youtu.be/5xRxuDh_cGk

👨🔬 Anton Vakhrushev "SketchBoost: Fast Gradient Boosted Decision Tree for Multioutput Problems"

⌚️ Monday 11 December 19.00 (Moscow time)

Add to Google Calendar

Gradient Boosted Decision Tree (GBDT) is a widely-used machine learning algorithm that has been shown to achieve state-of-the-art results on many standard data science problems. We are interested in its application to multioutput problems when the output is highly multidimensional. Although there are highly effective GBDT implementations, their scalability to such problems is still unsatisfactory. In this paper, we propose novel methods aiming to accelerate the training process of GBDT in the multioutput scenario. The idea behind these methods lies in the approximate computation of a scoring function used to find the best split of decision trees. These methods are implemented in SketchBoost, which itself is integrated into our easily customizable Python-based GPU implementation of GBDT called Py-Boost. Our numerical study demonstrates that SketchBoost speeds up the training process of GBDT by up to over 40 times while achieving comparable or even better performance.

It easy to install: pip install py-boost

It easy to use - see tutorial notebooks: Kaggle Open problems notebook, Tutorial_1_Basics, Tutorial_2_Advanced_multioutput, Tutorial_3_Custom_features

Github

Paper: Iosipoi, Leonid, and Anton Vakhrushev. "SketchBoost: Fast Gradient Boosted Decision Tree for Multioutput Problems." Advances in Neural Information Processing Systems 35 (2022): 25422-25435.

Gold medals on Kaggle: CAFA5 , Open problems - single cell perturbations 2023, Open problems 2022,

Lots of silver/bronze medals in recent Open problems 2023 were based on Pyboost.

Zoom link will be in @sberlogabig just before start. Video records: https://www.youtube.com/c/SciBerloga - subscribe !

📖 Presentation: https://t.me/sberlogacompete/10211, Poster: https://t.me/sberlogacompete/10215

📹 Video: https://youtu.be/5xRxuDh_cGk